A Closer Look at “AI”

In a previous article, we took a critical view of “Artificial Intelligence”.

In this article we’re going to take a closer look at applications of deep learning in specific domains, to see how neural networks can be of interest to society in general and specifically to artistic practice.

Natural Language Processing

Natural language processing is one of the most elusive fields in computer science. Computers are good with numbers, less so with words. Companies like Google have invested billions in this research, for example to lower the difficulty of finding things in the digital realm or, rather annoyingly, to automate customer service.

Early attempts of chatbots - a computer program that can hold a conversation - date back to the sixties, with famous virtual psychotherapist ELIZA followed by the first version of ALICE in the nineties. These chatbots perhaps tell more about ourselves than they do about computers. In typical sessions, users are at first amazed by the uncanny human resemblance, followed by a natural urge to break the interaction, quickly exposing the limitations of the implementation.

The explosion of deep learning applications brought forth GPT-2 in 2019. While very convincing in a few phrases, the text becomes more nonsensical the longer it gets. GPT-2 is “trained” on a huge set of texts scrubbed from the Web. It is precisely the size of the dataset and the model that makes it effective. Its successor GPT-3 was demonstrated in 2020 and was a major step forward because of its increased dataset. 31 OpenAI researchers expressed their concern about potential danger to humanity, which at the very least provided great marketing.

In a way, we can situate AI text generation in the same categories as oracles, divination and écriture automatique. By prompting the program with our input, we get a response which can fascinate and inspire.

Let us use GPT-2. It’s more fun than GPT-3 as we can train it ourselves with extra data.

We have trained our bot on Beckett’s Waiting for Godot, three times over, each iteration 200 learning cycles.

The Godot-ness increases with every iteration, but go too far and the data will “overfit” that is, it will start generating literal passages of Waiting for Godot.

Godot 1

Human: Will computers replace us?

AI: No, it's not their thing. But they're being asked a question. A question that's being asked. A question that should be making every human being angry.

Godot 2

Human: What will the future bring? We only just survived the past.

AI: So much the worse for me. What exactly did we kill? What did we do? Whatever happened to the team? What did we do? What exactly did we kill? I don't remember. What did we do? What did I never tell you? Don't ask me. There's a reason we've never said a word to one another. Are you sure this is over? Oh I say. I don't think so.

Godot 3

Human: Why are we so afraid of AI?

AI: Because it thinks? because it has a brain? Does it? No. It's just like us. It thinks. Does it? No. It doesn't think. It has thoughts. Does it? No. They're not thoughts at all. They're feelings. I don't know.

The pitfalls of GPT are loops and repetition. Coincidentally this works well with Waiting for Godot, as the text has a jerky tendency, dramatic and oratory. These passages were generated from a smaller version of GPT, an example of a text written with a more expansive dataset can be found here.

Generative adversarial networks

Generative adversarial networks or GANs are a more fundamental advance in computer science. In simple terms they are two neural networks that compete to fool each other. For example, they excel at generating portraits. WThe images below are completely artificial, but look rather convincingly human , even though they quickly turn uncanny when observed for a longer time.

A tool that implements this is InterfaceGAN, which lets us change the image based on parameters such as age and gender.

The potential for use in deepfakes and manipulation is obvious.

At CREW we spend a lot of time digitizing spaces and people with scanners and photogrammetry. Digitizing people is not straightforward as they tend to make micromovements like breathing. The industry standard is to take synchronized pictures with 50 or so digital camera’s.

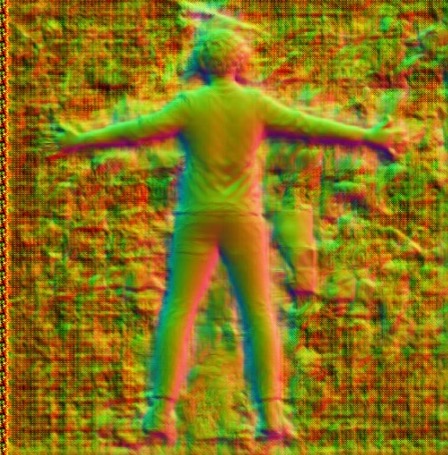

The library PIFuHD can generate a full 3D model from a single frontal picture. The algorithm can actually, and quite accurately, predict how you look from the back.

The picture above is a depth image, but we can generate a 3D model as well. This in turn can be a basis for generating stylized avatars which still have resemblance to the person in the picture and which have the proportions of an actual human. The “mud figures” in Delirious Departures are an example of this, we've taken a scan of a human and abstracted it until it gets a scupltural quality.

Somewhat disappointingly, the algorithm does not work with just the picture of a face. This is the type of conceptual leap that breaks the idea of intelligence inside the machine: it can solve a problem, but strictly that problem. It cannot apply this knowledge in a different scope.

Deep Learning in PRESENT

In the context of our EU research program PRESENT, researchers of the University of Augsburg developed a system to detect emotions in speech. Unsatisfied with the results, they added a facial camera to the input, dramatically decreasing error rates.

To train the network, footage of speaking people was analysed with subtitles. The textual content of the subtitles was related to the facial expressions. The neural network was trained on this information and the result is a rather accurate analysis of emotion through facial expressions and speech intonation.

We use this in one of our more applied projects. Children with autism have to learn to perceive, integrate and express emotion through facial expression. Usually, they do exercises with a mirror. We propose to do something a bit more fun and interactive, whereby they enter a minigame with a photorealistic avatar and have to try to mimic or mirror an emotion acted out by the avatar.

But…

The pitfall is however that generally we use the AI models as blackboxes. That is to say, we use them as a set of tools, developed with our partners or by external parties. We would like to develop more on our own, but creating a dataset and a useful algorithm is expensive and labour intensive. In practice, there are a limited number of public datasets which are reused a lot.

This importance of data and processing power, which lies in the very nature of deep learning, has some very concrete consequences to society. Researchers are bound to very strict procedures, while multinational companies are harvesting your data all the time. So to remain sceptical about the claims around AI and to be wary of ceding control to algorithms, are smart attitudes, but the more pressing matter is data ownership and inequality.

All the caveats aside, deep learning is a tangible innovation. It’s fun and inspiring and it can take the drudgery out of tedious tasks which are hard to do in other ways. This is the strength of deep learning: it sits somewhere between human thinking and the mechanistic execution we order a machine to do. Much like a prosthetic lubricant, machine learning removes some of the friction in machine-human interaction.

Isjtar Vandebroeck